Meta (META) CEO Mark Zuckerberg showcased impressive upgrades to the company's hit Ray-Ban Meta Glasses during the company's Meta Connect event on Wednesday, including the ability to translate live conversations, offer continuous talking features, and recognize the world around you. The updates make the Ray-Ban Meta Glasses, which start at $299, a far more intelligent piece of technology — and give them similar features to smartphone-based AI apps like Google's (GOOG, GOOGL) Circle to Search and Gemini and Apple's (AAPL) upcoming improved Siri and visual intelligence.

According to Meta, you'll now be able to do things like ask the glasses to remember where you parked. Or, if you want to know about landmarks in a city you're visiting, the Ray-Ban Meta Glasses can tell you more about a statue, building, or location when asked. At the grocery store and want to know what to make for dinner? Meta says the glasses can suggest what you should make based on what you're looking at while walking the aisles.

The company also says it's adding real-time translation to the Ray-Ban Meta Glasses. Zuckerberg showed off the feature during a live demo. And while there was a small delay between when a person stopped talking and the glasses began translating, it certainly appeared to work well. In fact, live-translating a conversation with the glasses looks far more natural than doing the same thing while holding up a smartphone.

Finally, Meta showed off a new limited-edition transparent design for the Ray-Ban Meta Glasses that lets you see the device's internal components. It gives the glasses a late-90s/early-2000s look that more tech companies should adopt. (Says the guy who grew up during that time.)

Meta's second-gen Ray-Bans are getting improved AI services soon. In a year of promising AI gadgets, Meta's second-gen Ray-Ban smart glasses ended up being one of the best, and something I kept on my face for far longer than I ever expected. They've been an unexpected hit for Meta, too, and a vehicle for exploring some camera-enabled AI features that can analyze the real world on the fly. At Meta's Connect conference, a show dedicated to VR, AR and a lot of AI, Meta announced new updates Wednesday for the glasses, though no new hardware. Instead, last year's versions will see new features such as live translation, camera recognition of QR codes and phone numbers, and support for AI analysis of live video recording. I got to check out just a few of those features at a press event before the announcement, using a pair of Ray-Bans perched over my own glasses (I didn't bring contact lenses).

The demos I walked through showed how I could look at a QR code with my glasses and then automatically open the linked website on my phone after my glasses snapped a photo. I looked at a model of a street full of toy cars and asked the glasses to describe the cars in front of me, and a photo was snapped without me having to say the usual “look” trigger word. I also tried deeper music control in the glasses, making specific music requests for tracks in Spotify (a feature that's also coming with support for Apple Music and Amazon Music). All of these features will work on iOS and Android using the Meta View app.

I'm most interested in the live translation feature, and how quickly it might respond to actual conversations. I also wonder how AI assistance with recorded video clips might work. At some point, AI-assisted glasses might have a much more continuous awareness of my world with cameras. But taking photos and videos also drains battery life, something the Meta Ray-Bans already struggle with over the course of a full day. According to Meta, battery life improvements are also in the works, which I'd love to see, although I'm not sure how the company will manage it.

My demos did have a few connection hiccups, too, something I've experienced at times using Meta Ray-Bans connected to my iPhone. For example, sometimes voice requests will hang for music playlist playback in a way that Siri requests don't with AirPods. Regardless, expect the Meta Ray-Bans to keep getting better. That's good news for anyone who already has a pair, although I wonder when Meta will make strides towards a third-gen version -- maybe next year.


Meta CEO Mark Zuckerberg announced updates to the company’s Ray-Ban Meta smart glasses at Meta Connect 2024 on Wednesday. Meta continued to make the case that smart glasses can be the next big consumer device, announcing some new AI capabilities and familiar features from smartphones coming to Ray-Ban Meta later this year. Some of Meta’s new features include real-time AI video processing and live language translation. Other announcements — like QR code scanning, reminders, and integrations with iHeartRadio and Audible — seem to give Ray-Ban Meta users the features from their smartphones that they already know and love.

Meta says its smart glasses will soon have real-time AI video capabilities, meaning you can ask the Ray-Ban Meta glasses questions about what you’re seeing in front of you, and Meta AI will verbally answer you in real time. Currently, the Ray-Ban Meta glasses can only take a picture and describe that to you or answer questions about it, but the video upgrade should make the experience more natural, in theory at least. These multimodal features are slated to come later this year.

In a demo, users could ask Ray-Ban Meta questions about a meal they were cooking, or city scenes taking place in front of them. The real-time video capabilities mean that Meta’s AI should be able to process live action and respond in an audible way. This is easier said than done, however, and we’ll have to see how fast and seamless the feature is in practice. We’ve seen demonstrations of these real-time AI video capabilities from Google and OpenAI, but Meta would be the first to launch such features in a consumer product.

Zuckerberg also announced live language translation for Ray-Ban Meta. English-speaking users can talk to someone speaking French, Italian, or Spanish, and their Ray-Ban Meta glasses should be able to translate what the other person is saying into their language of choice. Meta says this feature is coming later this year and will include more languages later on.

The Ray-Ban Meta glasses are getting reminders, which will allow people to ask Meta AI to remind them about things they look at through the smart glasses. In a demo, a user asked their Ray-Ban Meta glasses to remember a jacket they were looking at so they could share the image with a friend later on.

Meta announced that integrations with Amazon Music, Audible, and iHeart are coming to its smart glasses. This should make it easier for people to listen to music on their streaming service of choice using the glasses’ built-in speakers.

The Ray-Ban Meta glasses will also gain the ability to scan QR codes or phone numbers. Users can ask the glasses to scan something, and in the case of a QR code, the linked content will immediately open on the person’s phone with no further action required.

The smart glasses will also be available in a range of new Transitions lenses, which respond to ultraviolet light to adjust to the brightness of the room you’re in.

Since we first launched our Ray-Ban Meta glasses, they’ve been so popular that we’ve had trouble keeping them on the shelves until recently. People have shared millions of moments with friends and family since we first introduced them.

Whether you’re exploring a new city, sitting on the sidelines of a sporting event, or simply trying to be more present in your daily life, Ray-Ban Meta glasses are the perfect companion to help you experience the world, share your perspective and capture moments, completely hands-free. And now we’re adding new AI features, expanding partnerships and more.

First, we’re making it easier to have a conversation with Meta AI. Kick off your conversation with “Hey Meta” to ask your initial question and then you can ask follow-up questions without saying “Hey Meta” again. And you no longer need to say “look and” to ask Meta AI questions about what you’re looking at.

We’re adding the ability for your glasses to help you remember things. Next time you fly somewhere, you don’t have to sweat forgetting where you parked at the airport — your glasses can remember your spot in long-term parking for you. And you can use your voice to set a reminder to text your mom in three hours when you land safely.

You can now ask Meta AI to record and send voice messages on WhatsApp and Messenger while staying present. This comes in especially handy when your hands are full or when you can’t get to your phone easily to write out a text.

We’re adding video to Meta AI, so you can get continuous real-time help. If you’re exploring a new city, you can ask Meta AI to tag along, and then ask it about landmarks you see as you walk or get ideas for what to see next — creating your own walking tour hands-free. Or, if you’re at the grocery store and trying to plan a meal, you can ask Meta AI to help you figure out what to make based on what you’re seeing as you walk down the aisles, and if that sauce you’re holding will pair well with that recipe it just suggested.

Soon, your glasses will be able to translate speech in real time. When you’re talking to someone speaking Spanish, French or Italian, you’ll hear what they say in English through the glasses’ open-ear speakers. Not only is this great for traveling, it should help break down language barriers and bring people closer together. We plan to add support for more languages in the future to make this feature even more useful.

We’re partnering with Be My Eyes, a free app that connects blind and low-vision people with sighted volunteers so they can talk you through what’s in front of you. Thanks to the glasses and POV video calling, the volunteer can easily see your point of view and tell you about your surroundings or give you some real-time, hands-free assistance with everyday tasks, like adjusting the thermostat or sorting and reading mail.

In addition, we’re advancing our integrations with Spotify and Amazon Music, and adding new partnerships with Audible and iHeart. You can use your voice to search, discover and play content on the go. Ask to play by song, artist, album, or audiobook. And you can get more information about the content your glasses are playing (“Hey Meta, what album is this from?”).

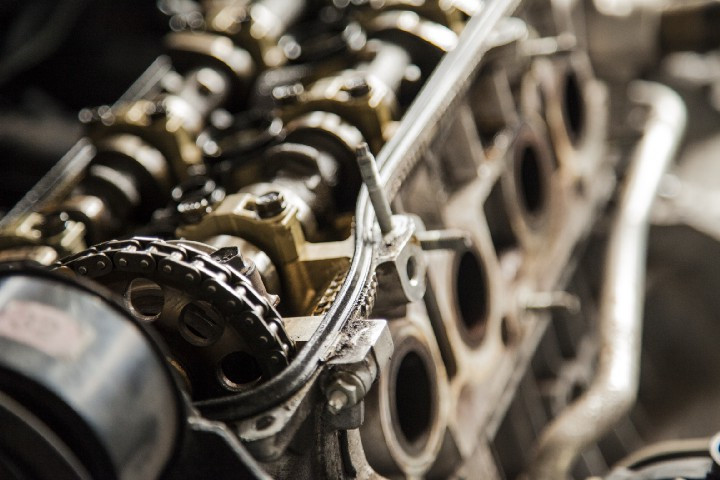

We’re dropping a new, limited edition set of Shiny Transparent Wayfarer frames so you can show off the tech inside them. This special edition embraces the essence of innovation through transparency, leaning heavily into craftsmanship, design and ingenuity. And we’re bringing EssilorLuxottica’s new range of UltraTransitions® GEN S™ lenses to the Ray-Ban Meta collection, giving you even more options to adapt quickly in all light conditions.

Learn more at meta.com/smart-glasses.